Android App Vulnerabilities: Hidden Mobile App Security Risks

Android developers frequently use third-party libraries to add ready-made functionality to their apps and to benefit from the extensive ecosystem. Many popular libraries are open source projects or are built by major software companies, but they are not immune to security vulnerabilities. For example, a recent study of Android app vulnerabilities found that third-party libraries are a major contributor to Android application security risks: code not written by the app’s developers accounted for between 60% and 95% of the vulnerabilities in the apps analyzed.

As library code runs with the same privileges and capabilities as developer-written code, this can present unique challenges when securing an app.

Recap: Libraries on Android

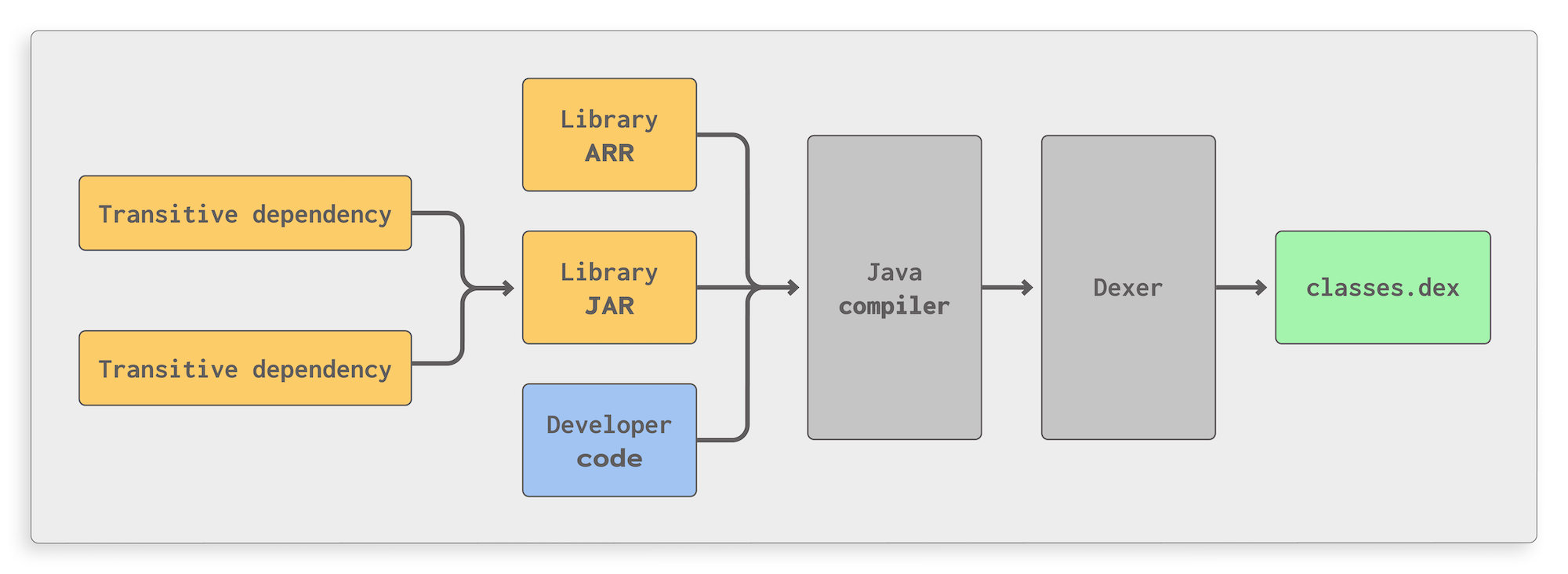

Libraries are usually added to an Android app project by specifying them as dependencies in the project’s Gradle files, which makes Android Studio download and integrate them automatically. In contrast to other platforms, Android libraries are always merged with the developer-written code, meaning that in the final APK file, both types of code end up in the same file and run with the same privileges and capabilities.

The figure above illustrates how this process works in a simplified manner: developer-written code as well as library code coming in the form of JAR or AAR archives are fed to the Java compiler which generates Java bytecode. This, in turn, is converted to the Dalvik format by a Dexer, resulting in a single Dex file that contains all application code. For large apps, this process might result in several Dex files which still contain mixed library and developer-written code. All of this is done by Gradle, so the process is out of the hands of the developer.

One consequence is that if an app successfully requests a permission in its core code, any library code in the app can also access the resources protected by that permission. By extension, security issues in library code can have the same impact as issues in developer-written code. Library code can also change global settings that affect the security of the entire app.

In this post, we will discuss two examples of security issues in library code that can affect the security of the entire app, turning secure, developer-written code into insecure code by changing global settings. We will also explain how to detect and address security issues in library code to advance towards the goal of a secure mobile app.

Security issues in library code

The security impact of issues in library code can be manifold. There are generally two categories of security issues to address in library code. The first consists of standard security issues that are located in library code and pose a risk when that code runs. The second consists of pieces of library code that can compromise the security of the entire app. Both require developer attention to ensure the app’s security is not compromised.

In this context, it is important to note that libraries can have dependencies of their own. Even if a developer did not explicitly add a library to the app, it may still be pulled in this way, which can result in a large amount of additional library code becoming part of the app.

Global HostnameVerifiers and their impact on app communications

When establishing an SSL/TLS connection, Android uses a HostnameVerifier to check if the hostname on the server’s certificate matches the hostname that the application is trying to connect to. The correct functioning of the HostnameVerifier is therefore essential in protecting an app’s communications against Man-in-the-Middle (MitM) attacks. Read more about this topic in our blog post about TLS certificate security.

On Android, it is possible to use custom HostnameVerifiers to cover use cases like legacy servers, custom CAs, or internal use cases where deviations from the standard verification logic are needed. In addition, it is possible to set a custom verifier as the default verifier for all connections. This can also be done from library code, meaning that if a library sets a defective default HostnameVerifier, this will compromise all connections that the app makes.

The example code below is found in many third-party libraries in the wild:

    HttpsURLConnection.setDefaultHostnameVerifier(new HostnameVerifier()
    {
        @Override
        public boolean verify(String hostname, SSLSession session)
        {
            // Accepts any host, even if it is invalid and/or malicious
            return true;
        }
    });

In short, this sets a default HostnameVerifier that accepts any hostname without performing any actual verification. This makes the entire app vulnerable to MitM attacks, potentially allowing attackers to eavesdrop on sensitive communications. For apps that process sensitive data such as financial or personal information, the security impact is potentially devastating.
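Where a deviation from the standard verification logic is genuinely needed, it can be scoped to a single connection with `HttpsURLConnection.setHostnameVerifier` instead of being installed as the global default. The following is a minimal sketch under stated assumptions, not a definitive implementation: the class `ScopedVerifier` and the host names `legacy.internal.example` and `services.example.com` are hypothetical, and the sketch assumes that the legacy server presents a certificate issued for the second name and that the platform's standard verifier checks the certificate in the session, as it does on Android.

```java
import javax.net.ssl.HostnameVerifier;
import javax.net.ssl.HttpsURLConnection;
import javax.net.ssl.SSLSession;

final class ScopedVerifier {
    // Hypothetical names: the legacy server is reached under LEGACY_HOST but
    // presents a certificate that was issued for CERTIFICATE_HOST.
    static final String LEGACY_HOST = "legacy.internal.example";
    static final String CERTIFICATE_HOST = "services.example.com";

    // Builds a verifier that still performs real verification: for the legacy
    // host it checks the certificate against the name it is known to carry,
    // and for every other host it defers to the standard verifier unchanged.
    static HostnameVerifier forLegacyHost(HostnameVerifier standard) {
        return (String hostname, SSLSession session) -> {
            if (LEGACY_HOST.equalsIgnoreCase(hostname)) {
                return standard.verify(CERTIFICATE_HOST, session);
            }
            return standard.verify(hostname, session);
        };
    }

    // Applies the verifier to one connection only; the process-wide default
    // used by the rest of the app and by other libraries stays untouched.
    static void apply(HttpsURLConnection connection) {
        connection.setHostnameVerifier(
                forLegacyHost(HttpsURLConnection.getDefaultHostnameVerifier()));
    }
}
```

Because nothing here calls `setDefaultHostnameVerifier`, a mistake in this verifier would affect only the connections it is explicitly applied to, not every connection the app makes.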

WebView remote debugging

Another example of a global setting that affects the security of an entire application relates to a frequently-used component in Android, namely WebViews. WebViews are often used to provide in-app browsing, display remote web content, or even host entire applications for cross-platform frameworks.

    // Globally enable WebView remote debugging
    WebView.setWebContentsDebuggingEnabled(true);

By default, WebViews do not allow external debuggers to be attached, to protect the data that is being rendered in the view. Developers can enable debugging for WebViews, with the corresponding setting also being a global setting, which is independent from the main setting controlling whether an app can be debugged. The line of code above shows the relevant Android API method call.

If library code enables this setting, all WebViews in the app will be debuggable, even in production versions of an app. This makes it substantially easier for attackers to eavesdrop on potentially sensitive information via an attached PC and manipulate data in WebViews. For example, this makes it trivial for an attacker to cheat at mobile games using WebViews, as they have full control over any variables and the environment in which the game runs via the debugger.

Standalone security issues in library code

In addition to affecting global settings, libraries can also contain “regular” security issues in their own right. For example, AAR-based libraries can contain Android components that are susceptible to the same problems as developer-written components, such as improperly secured exported components, which can be used by attackers to run code with the app’s privileges, steal sensitive data, and the like.

As another example, many applications request the location permission for their own use cases. If the user grants that permission, library code also gains access to the device’s location. A library could then log the location and send it over a potentially insecure connection, creating both a security and a compliance risk even though the developer-written code might be secure.

In regions with strong data protection laws, this can also carry severe legal risks, as the app’s authors will be responsible for any leaked personal information, regardless of whether the original vulnerability is located in library code.

Finding and addressing issues in library code

The above examples make it clear that the security of library code is as important to the overall app security as that of developer-written code. This section will explain how to detect and evaluate Android app vulnerabilities in libraries using a mobile application security testing (MAST) tool.

To detect security vulnerabilities in library code, you can use a MAST tool to automatically find security issues. Since the library code is mixed with the app code in the final APK, most MAST tools for Android analyze library code just as they do developer-written code. If the tool finds security issues, it is important to evaluate each issue to check if it affects the security of the overall app. Good MAST tools should clearly indicate whether a security issue is in library or developer-written code.

Scanning the entire app in this way is also more thorough than just scanning libraries individually, as some security vulnerabilities only occur in the interaction between library and developer-written code.

The first step in evaluating a finding is to inspect the piece of code in question and determine whether it is actually a true positive. For example, for debuggable WebViews, there can be checks around the call to the relevant API method that prevent it from being called in a production app. Some MAST tools may not recognize these checks, in which case they produce a false positive.

    // Only enable WebView remote debugging if the APK is in debug mode as well
    if (BuildConfig.DEBUG)
    {
        WebView.setWebContentsDebuggingEnabled(true);
    }

The code above is an example of secure handling of WebView remote debugging. It will only enable WebView debugging if the APK itself is in debug mode, which should never be the case for the release build of an app.

If the finding is a true positive, the next step is to determine if the affected code is reachable from the core app code. Since determining this statically can be difficult, this is a good opportunity to use interactive or dynamic analysis. If the relevant code is not reachable, i.e. it does not run during any conceivable run of the app, it is not a concern for the app's overall security. It is, however, very difficult to precisely determine whether a given piece of code is reachable, or will stay unreachable in the future, so removing these issues or switching libraries either to a version that doesn’t have the issue, or to a different library altogether, is recommended.
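For the default HostnameVerifier case discussed earlier, one lightweight dynamic check is to observe at run time whether the default verifier was actually replaced while the app was being exercised. The sketch below illustrates the idea; the class `DefaultVerifierGuard` is hypothetical, and it assumes that `HttpsURLConnection.getDefaultHostnameVerifier()` keeps returning the same instance until some code calls `setDefaultHostnameVerifier`, so that an identity comparison is meaningful.

```java
import javax.net.ssl.HostnameVerifier;
import javax.net.ssl.HttpsURLConnection;

final class DefaultVerifierGuard {
    private final HostnameVerifier baseline;

    // Capture the platform's default verifier as early as possible, before
    // any library initialization code has had a chance to run.
    DefaultVerifierGuard() {
        this.baseline = HttpsURLConnection.getDefaultHostnameVerifier();
    }

    // True if some code has installed a different default verifier since
    // the baseline was captured.
    boolean wasReplaced() {
        return HttpsURLConnection.getDefaultHostnameVerifier() != baseline;
    }

    // Reinstalls the captured baseline as the process-wide default.
    void restore() {
        HttpsURLConnection.setDefaultHostnameVerifier(baseline);
    }
}
```

A guard like this could be created at app startup in a test build and queried after exercising the library's features. A positive result shows that the offending call is reachable. A negative result only shows that it was not reached during the runs that were tried, so it does not prove the code is unreachable.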

If the finding is both a true positive and reachable, it represents a threat to the overall app security. In this case, report the issue to the library’s authors so that it can be fixed in an upcoming version of the library. In the worst case, such as for abandoned projects, it might be necessary to switch to a different library.
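The evaluation steps described in this section can be summarized as a small decision function. This is only an illustrative sketch: the class `FindingTriage`, the enum `Action`, and its constant names are hypothetical and do not correspond to the output of any particular MAST tool.

```java
final class FindingTriage {
    // Hypothetical outcome labels for a finding located in library code.
    enum Action {
        DISMISS_FALSE_POSITIVE,    // a guard prevents the issue in production
        PLAN_REMOVAL_OR_UPGRADE,   // real issue, not reachable at the moment
        REPORT_AND_FIX             // real issue that the app can reach
    }

    // Mirrors the order of the checks: first decide whether the finding is
    // a true positive, and only then consider reachability.
    static Action decide(boolean truePositive, boolean reachable) {
        if (!truePositive) {
            return Action.DISMISS_FALSE_POSITIVE;
        }
        if (!reachable) {
            return Action.PLAN_REMOVAL_OR_UPGRADE;
        }
        return Action.REPORT_AND_FIX;
    }
}
```

Unreachable true positives get their own outcome rather than being dismissed, because reachability is hard to establish precisely and can change with future versions of the app or the library.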

How AppSweep helps

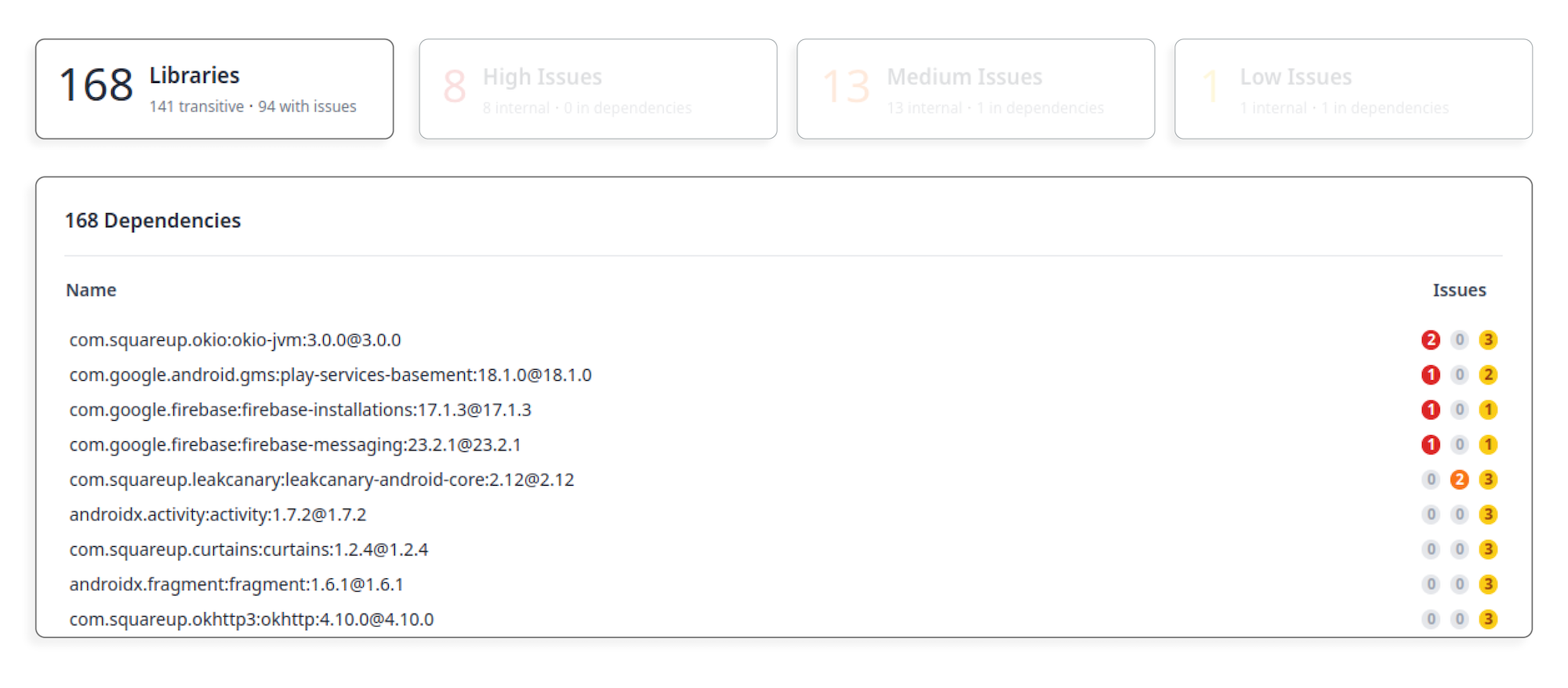

AppSweep is a free MAST tool that analyzes library code in the same way as developer-written code and provides a convenient overview of an app’s dependencies and any hidden mobile app security issues contained within.

To check your app for security issues in library code, simply upload it to AppSweep. Using the AppSweep Gradle plugin is optional, but it provides the best results for library analysis. Once the scan is completed, you can access a list of libraries and their security issues by clicking on the Libraries box, as indicated in the screenshots below:

On this page, you can click individual library names to get a list of findings for that specific library. To secure your app, follow the steps outlined above to check each security issue and mitigate them, where possible.

Conclusion

In this blog post, we have illustrated the problem of security issues in library code and their potentially devastating impact on the overall security of an app, even if the developer-written code is secure. We have also outlined how to identify and address these issues using a Mobile Application Security Testing (MAST) tool, such as AppSweep.

To minimize the risk of security issues in libraries, it is also important to regularly check for updates to your app’s dependencies, apply them, and read the release notes to see if there are any security issues that need action. There are also automated tools that monitor dependencies for known security issues and, where possible, automate the updates. These can be a good complement to scanning library code for security issues.